Making VMs from the Inside Out

... originally titled "Making VMs the Borg Way"... which kind of makes sense for the beginning of the process; "stuff continues running unchanged internally" is really not how they do things though.

I presume most VMs are created by launching an empty one with an installer ISO and installing it from there.

Or perhaps if you already have a physical machine that you would like to turn into a VM, you can shut it down, take out the hard drive, turn it into a hard disk image file using another machine, and launch it as a VM. This way, if you have a nice server already set up, you don't need to do the work a second time.

If you are adventurous, you don't even need to disassemble the original machine; you can just boot it from a USB drive and make an image of the original disk that way.

Of course, you can't use the original operating system to make an image out of itself while running. There lie madness and disk corruption.

Or do they really?

Well, you clearly don't want to copy a block device byte-by-byte while it's mounted. After all, there are all these services that are running. For example, Postgres will have half-written logs that you need to close first. This problem is worse than just suddenly shutting down the machine: after all, you don't take a snapshot of the disk once; pieces that you look at first could end up being inconsistent with pieces that you look at later.

Even if you end up stopping all the services first, you would still encounter issues at the file system level; just hoping that there won't be much disk activity in the meantime is not the best strategy.

But...

... Is there a way to ensure that no one will be affecting the disk while we're doing this?

We can do this

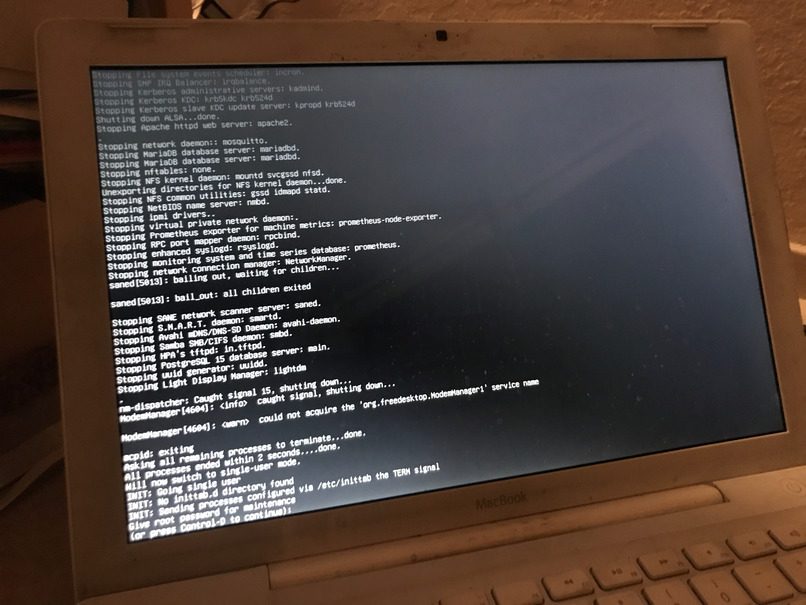

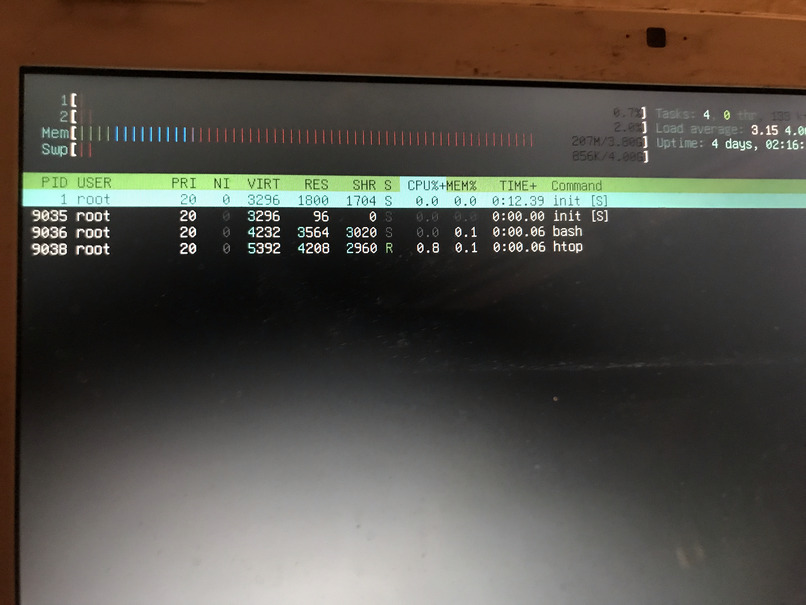

As it happens, Unix systems have a "Single User Mode". You can just say...

~ # init 1

... and have init shut down all the running services, leaving only a single bash shell that you get in exchange for a root password. I haven't seen it being used recently (probably old Unix systems used to be prone to breakage a lot more), but it comes in pretty handy now.

Meanwhile, the other side of the puzzle is just... remounting the root filesystem in read-only mode! (No one will complain about not being able to write anything... since, in single user mode, basically no one is running.) Regardless of whether it's mounted or not, we can't corrupt stuff if we don't ever write to it.

~ # mount -o remount,ro /

(Disclaimer: don't take my word on this and don't do this process to anything that's any sort of important.)

(Unless you want to.)
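One way to double-check that the remount actually took, sketched under the assumption of a Linux system that exposes /proc/mounts: look at the mount options of / and see whether ro is among them. The function name root_is_readonly is made up for illustration. (If something still has a file open for writing, the remount itself tends to fail with a "mount point is busy" error, which is a decent hint that not everything has been stopped yet.)

```shell
# root_is_readonly [MOUNTS_FILE]
# Succeeds if the last entry for "/" in a /proc/mounts-style file carries
# the "ro" option. MOUNTS_FILE defaults to /proc/mounts (Linux assumption).
root_is_readonly() {
    awk '$2 == "/" { opts = $4 }
         END {
             n = split(opts, o, ",")
             for (i = 1; i <= n; i++) if (o[i] == "ro") exit 0
             exit 1
         }' "${1:-/proc/mounts}"
}

if root_is_readonly; then
    echo "root is read-only"
else
    echo "root is still writable"
fi
```

Splitting the options on commas rather than grepping for "ro" avoids a false positive on options such as errors=remount-ro, which show up on plenty of perfectly writable ext4 roots.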

Where do we copy it?

There are limits to all this. Obviously, if you want to clone a file system, you need a writable target to clone it to.

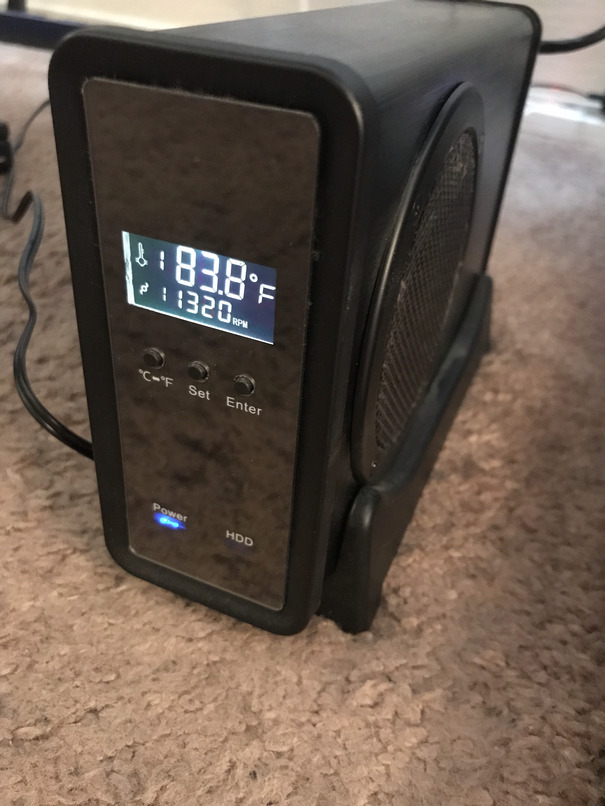

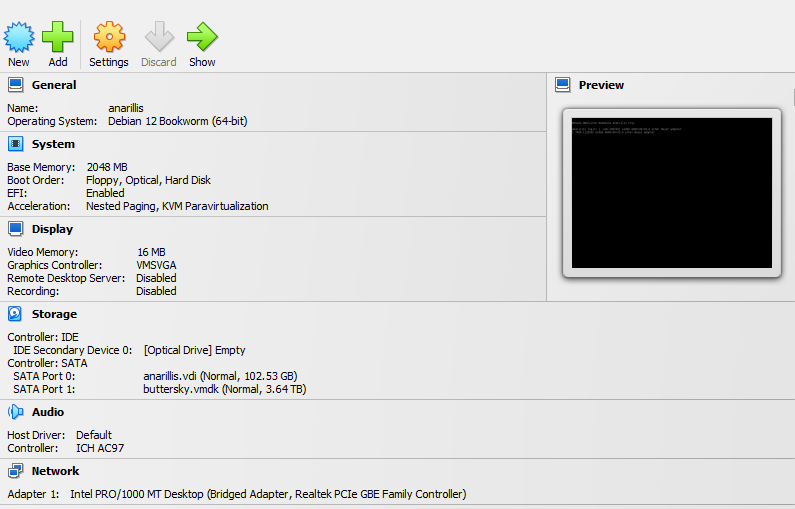

The actual system I was doing this to is my MacBook from 2007, having a 500 GB-ish system drive in it, but also serving files from an external 4TB hard drive. (It also happens to be the one that was running coordination for light switches, among other things.) We could just use this external drive as a target, but any partition would do, as long as it's not the root FS.

For extra niceness, we can turn our root directly into a VM-mountable virtual hard drive. QEMU has some nice tools to do this. (Also, despite most pre-made USB images not having QEMU on them, where we're currently operating is still our actual server, which does happen to have it installed already. Worst case is just an apt install away.)

Let's create an image that will be big enough to hold our root file system!

~$ qemu-img create -f vdi anarillis.vdi 110089077248

It doesn't particularly hurt to make it somewhat larger than we'll need; after all, it doesn't take up too much room until we write to it. (The above command will create a 400 kB image file, despite its capacity being 100 gigs.)
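If you would rather derive the image size than eyeball it, here is a minimal sketch of the arithmetic. The helper name image_size_bytes, the 10% default headroom, and the rounding up to a whole MiB are all my own assumptions, not anything qemu-img requires.

```shell
# image_size_bytes BYTES [HEADROOM_PERCENT]
# Prints BYTES plus some headroom (default 10%), rounded up to a whole MiB.
image_size_bytes() {
    local bytes=$1 pct=${2:-10} mib=1048576
    local padded=$(( bytes + bytes * pct / 100 ))
    echo $(( (padded + mib - 1) / mib * mib ))
}

# A 20 GiB file system with the default 10% headroom:
image_size_bytes 21474836480    # prints 23622320128

# Real-world usage (needs root to read the device size), not run here:
#   qemu-img create -f vdi anarillis.vdi \
#       "$(image_size_bytes "$(blockdev --getsize64 /dev/sda3)")"
```

The headroom is there because the image also has to hold a partition table and whatever other partitions you decide to bring along. Afterwards, qemu-img info anarillis.vdi reports both the virtual size and the space actually taken on disk, which is a quick way to see the sparseness for yourself.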

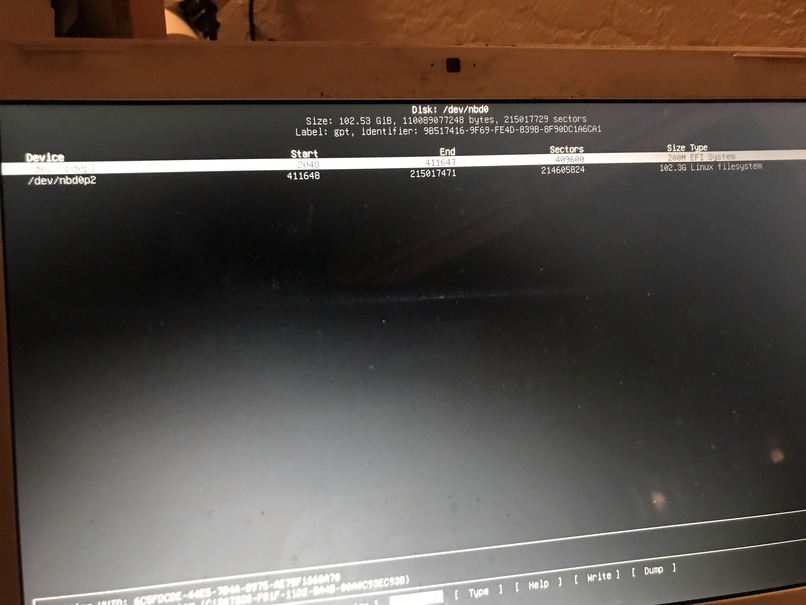

We can then have this as an actual block device available:

~# modprobe nbd

~# qemu-nbd -c /dev/nbd0 -f vdi anarillis.vdi

From here, we can just use our standard toolset, like cfdisk, to create partitions to fit the existing ones. (Unless we want to copy over the entire hard drive, which is also an option.)
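When recreating the partitions, the one constraint that really matters is that each target is at least as large as its source. A small sketch of that check follows; check_fit is a hypothetical helper, and the device names in the usage comment are the ones from this post.

```shell
# check_fit SRC_BYTES DST_BYTES
# Reports whether a target partition is large enough to receive the source.
check_fit() {
    local src_bytes=$1 dst_bytes=$2
    if [ "$dst_bytes" -ge "$src_bytes" ]; then
        echo "ok: target has $(( dst_bytes - src_bytes )) bytes to spare"
    else
        echo "too small: target is short by $(( src_bytes - dst_bytes )) bytes"
        return 1
    fi
}

# Real-world usage (needs root and both devices present), not run here:
#   check_fit "$(blockdev --getsize64 /dev/sda3)" \
#             "$(blockdev --getsize64 /dev/nbd0p2)"
```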

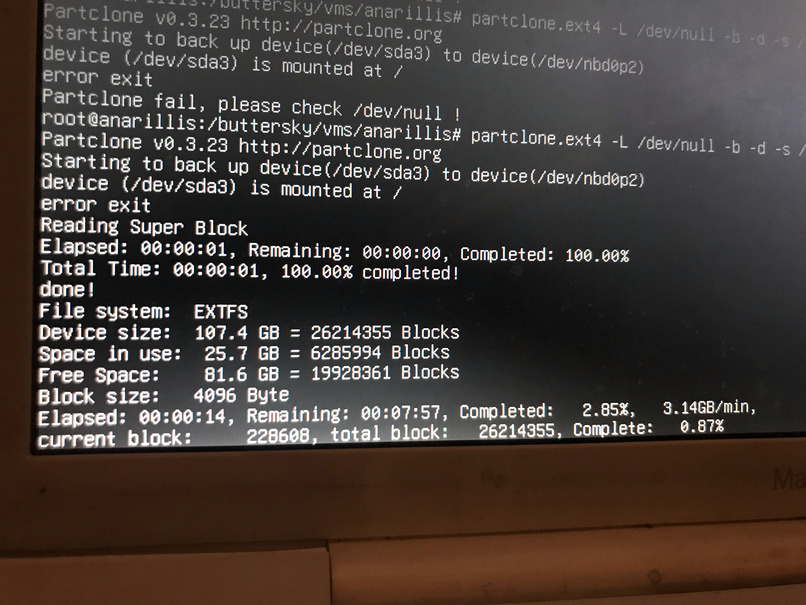

All these having been prepared, it's time now to do the actual copying. We could technically use dd to do it, but we have good reasons not to: our root file system only uses 20 gigs of space, so we don't want to pointlessly inflate our virtual disk to 100 actual physical gigs with useless zeros. Instead, we use partclone, which is smart enough to only copy parts that are being used by files. (As an added bonus, it's faster too.)

~ # init 1

~ # mount -o remount,ro /

~ # partclone.ext4 -b -d -s /dev/sda3 -o /dev/nbd0p2 -F

-b: it's a direct device-to-device clone

-d: for additional verbosity

-s: the source device

-o: the target device

-F: forcing partclone to ignore the typically pretty relevant fact that we are trying to copy a file system that is actively in use. It also happens to ignore things like "the target file system is smaller than the source one", so use with care.
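Put together, the post-"init 1" part of the process could be sketched as a script like the one below. It defaults to a dry run that only prints the commands. The ordering, the run wrapper, the read-only e2fsck pass on the target, and the final qemu-nbd -d disconnect are my additions rather than steps from the original session; it also assumes the image has already been partitioned and lives on a drive that stays writable. init 1 itself is left out on purpose, since it would take the calling shell down with it.

```shell
# Device and file names as used in this post; adjust for your own system.
SRC=/dev/sda3
IMG=anarillis.vdi
NBD=/dev/nbd0
DST=${NBD}p2
DRY_RUN=${DRY_RUN:-1}   # set DRY_RUN=0 to actually execute (as root)

# run CMD...: print the command in dry-run mode, execute it otherwise.
run() {
    if [ "$DRY_RUN" = 1 ]; then
        printf '+ %s\n' "$*"
    else
        "$@"
    fi
}

clone_root() {
    local status
    run modprobe nbd &&
    run qemu-nbd -c "$NBD" -f vdi "$IMG" &&
    run mount -o remount,ro / &&
    run partclone.ext4 -b -d -s "$SRC" -o "$DST" -F &&
    run e2fsck -f -n "$DST"      # read-only sanity check of the copy
    status=$?
    run qemu-nbd -d "$NBD"       # detach the image whatever happened above
    return "$status"
}

clone_root
```

A non-zero exit status means one of the steps failed or the check found the copy not to be clean, in which case the image probably shouldn't be trusted.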

That's it, we can just shut down the original system, move the external hard drive to our new computer, and try booting the virtual hard disk.

Bootability

If the source system was installed sometime in the past decade, there is a decent chance that it is EFI-based already. In this case, you can just copy over the EFI partition to the target system and have e.g. VirtualBox do an EFI boot. It will just work.

... it might just work?

(It did not.)

First of all, VirtualBox doesn't yet know what exactly to boot, so you need to go into its "BIOS" screen and select something from the EFI partition to launch.

Also, if you copied partitions one by one, Grub might end up being somewhat confused about where things went.

grub rescue>

This being EFI though, you don't necessarily need to chroot and try to fix Grub from elsewhere. You can instead use the excellent rEFInd boot manager to directly boot Linux. You can then just fix Grub once you're in.
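"Fixing Grub once you're in" could look roughly like the following on a Debian or Ubuntu style system. That is an assumption on my part, as is the EFI partition being mounted at /boot/efi. The script only prints the commands unless DRY_RUN=0 is set.

```shell
ESP=/boot/efi           # assumption: where the EFI system partition is mounted
DRY_RUN=${DRY_RUN:-1}   # set DRY_RUN=0 to actually execute (as root)

# run CMD...: print the command in dry-run mode, execute it otherwise.
run() {
    if [ "$DRY_RUN" = 1 ]; then
        printf '+ %s\n' "$*"
    else
        "$@"
    fi
}

repair_grub() {
    # Reinstall the EFI build of Grub onto the (copied) EFI partition...
    run grub-install --target=x86_64-efi --efi-directory="$ESP" &&
    # ...and regenerate grub.cfg so it points at the partitions as they are now.
    run update-grub
}

repair_grub
```

If the VM forgets its boot entry between runs, adding --removable to grub-install (which also places Grub at the EFI fallback path) may be worth a try.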

Adding physical disks

Remember the external drive that we cloned our root file system onto? Now it is sitting in the host machine as an actual hard drive (it's the bottom one!)

You can still attach it to the VM, too. It's slightly tricky with permissions under VirtualBox & Windows; this article has some good ideas; this one has some more. (In the end, you'll need to run both VirtualBox and its service with admin rights.)

For extra niceness, you can also set up bridged networking so that the virtual machine keeps sitting on the same home network as before.

One more thing: you can even run it in the background, without showing a window, with VirtualBox.
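For reference, the VirtualBox side of all this (EFI firmware, the cloned disk, bridged networking, headless start) can also be done from the command line with VBoxManage. The sketch below is not what I actually clicked through: the VM name, the controller name "SATA", and the host adapter name "Ethernet" are placeholders. It is written in shell syntax; on a Windows host the same VBoxManage arguments should work from a command prompt. By default it only prints the commands.

```shell
VM=anarillis            # hypothetical VM name, borrowed from the image file
DISK=anarillis.vdi
BRIDGE_IF="Ethernet"    # placeholder; list real ones with: VBoxManage list bridgedifs
DRY_RUN=${DRY_RUN:-1}   # set DRY_RUN=0 to actually execute

# run CMD...: print the command in dry-run mode, execute it otherwise.
run() {
    if [ "$DRY_RUN" = 1 ]; then
        printf '+ %s\n' "$*"
    else
        "$@"
    fi
}

setup_vm() {
    run VBoxManage createvm --name "$VM" --register &&
    # EFI boot plus a NIC bridged onto the home network
    run VBoxManage modifyvm "$VM" --firmware efi \
        --nic1 bridged --bridgeadapter1 "$BRIDGE_IF" &&
    # Attach the cloned image as the first SATA disk
    run VBoxManage storagectl "$VM" --name SATA --add sata &&
    run VBoxManage storageattach "$VM" --storagectl SATA \
        --port 0 --device 0 --type hdd --medium "$DISK" &&
    # Start without opening a window
    run VBoxManage startvm "$VM" --type headless
}

setup_vm
```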

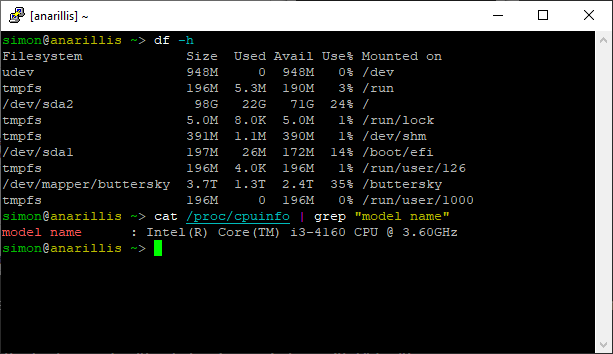

... it worked?

After all this, things can continue impressively unchanged. The machine is still on the network; you can ping it if you want. Or SSH into it. It still works as a file server (with no changes needed on the client side either, thanks to Tailscale keeping the same IP). The light switches don't speak Tailscale, but they still find it too due to mDNS name resolution also working.

We have neatly assimilated a computer into our collective, without having to figure out how to boot it from anything else but its own hard drive!

(Isn't this somewhat of an opposite to what we did in "weird OS installs"?)