Why 5G is Faster

... I don't think it's why you think it is?

(Disclaimer: I'm a software engineer, not a telecom person. Any of the below statements might be actively wrong.)

... why is this a question, even?

Isn't it obvious???

2G is from... 1991? It could do circuit-switched data at about dialup-modem speeds... which was also billed per minute, and was, thus, Really Expensive. (... for me at least, back then.)

So... many people encountered mobile internet via GPRS (also about 64 kbps, but at least you pay per byte, not per minute) or EDGE (... you still get that one these days sometimes, as a fallback, if you're in the middle of nowhere. Little "E" where "LTE" is supposed to be on the screen. It's about 400 kbps max.)

And then we get to 3G / UMTS (early smartphone era, couple of megabits), HSPA (tens of megabits), 4G (more tens of megabits... up to hundreds, even!), and, finally, 5G (Very Fast™).

Just the way we used to have computers that were really slow (286 CPUs at a couple of MHz), and this eventually evolved into the current multi-core, multi-gigahertz monsters that leave everything else in the dust. This is how technology works. It's the same with radio, right? You get fancier, um, tech stuff, which is newer, cooler, and faster? And... this is just going to continue forever!

Except... um...

Channel capacities

Enter Claude Shannon and his theorem on how many bits you can fit into a channel of a certain bandwidth and signal-to-noise ratio. That second article is quite short, but if you'd like a short summary: for a given noise level, you can only fit a certain number of bits per second into a channel that is a given number of MHz wide.
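If you'd rather see it as code: here's a minimal Python sketch of the formula, capacity = bandwidth × log2(1 + signal/noise). The 10 MHz and 20 dB values are made up for illustration, not taken from any real network.

```python
import math

def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Upper bound on the error-free bit rate of a noisy channel."""
    snr_linear = 10 ** (snr_db / 10)  # decibels -> plain power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)

# Illustrative values only: a 10 MHz channel at 20 dB signal-to-noise.
base = shannon_capacity_bps(10e6, 20)
wider = shannon_capacity_bps(20e6, 20)   # twice the bandwidth
louder = shannon_capacity_bps(10e6, 23)  # twice the signal power (+3 dB)

print(f"10 MHz @ 20 dB: {base / 1e6:.1f} Mbit/s")
print(f"20 MHz @ 20 dB: {wider / 1e6:.1f} Mbit/s")
print(f"10 MHz @ 23 dB: {louder / 1e6:.1f} Mbit/s")
```

Doubling the bandwidth doubles the ceiling; doubling the transmit power only nudges it up a bit. That asymmetry is what the rest of this post is about.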

Think dialup internet: it's running over phone lines, originally designed for human speech. Humans don't care too much if you filter out everything over 10 kHz; you can still understand speech quite well over a 4-5 kHz channel... so the network isn't really designed to carry much more. Accordingly, modems won't do much more than roughly ten times that number in kilobits per second; 56k modems are the very best you can get out of this, assuming that the lines are mostly free of noise.
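To put a number on that, here's a rough sketch that turns Shannon's formula around and asks how clean the line would have to be for a given bit rate. The 4 kHz channel width is the figure from above; treating a phone line as an ideal noisy channel is my simplifying assumption, and the list of bit rates is just a handful of examples.

```python
import math

def required_snr_db(bitrate_bps: float, bandwidth_hz: float) -> float:
    """Minimum signal-to-noise ratio (dB) that Shannon's formula
    demands to push bitrate_bps through bandwidth_hz."""
    snr_linear = 2 ** (bitrate_bps / bandwidth_hz) - 1
    return 10 * math.log10(snr_linear)

VOICE_CHANNEL_HZ = 4_000  # assumed width of a phone voice channel

for bitrate in (9_600, 33_600, 56_000, 1_000_000):
    snr = required_snr_db(bitrate, VOICE_CHANNEL_HZ)
    print(f"{bitrate:>9} bit/s needs at least {snr:6.1f} dB SNR")
```

56 kbit/s comes out at a bit over 40 dB, which only a very clean line will give you. A megabit over the same channel would need hundreds of dB, which is not happening.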

In fact, DSL is faster because "human speech" is no longer what we pretend to be carrying; instead of carrying analog signals right through the entire phone network, we only need to talk to the DSL access multiplexer at the nearest telephone exchange (hopefully just a couple of hundred meters away); you can actually get a fair amount of data through a twisted pair up there.

And the same thing is, obviously, true for radio, too!

Mobile standards

2G mobile networks didn't actually have a whole lot of spectrum to work with. You can look at Wikipedia: in the US, mobile phones used frequencies around 850 MHz, while in Europe, it was 900 MHz... what they actually meant by that is a 25 MHz wide downlink (base station to phone) band and a 25 MHz wide uplink (phone to base station) band, to be shared by all phones next to a base station. And between all the carriers. While also not overlapping neighboring cells. Which, for 2G, weren't overly small. So... you can kinda see how it's hard to edge out (yeah I know, it's bad) more than a couple hundred kilobits out of this, per user, no matter how good your tech gets.
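Here's that sharing argument as back-of-the-envelope Python. Only the 25 MHz band width comes from the text above; the number of carriers, the reuse factor, and the user count are hypothetical placeholders I picked, and 0.5 bit/s/Hz is the 2G ballpark quoted further down in this post.

```python
def per_user_kbps(band_hz, carriers, reuse_factor, bits_per_hz, active_users):
    """Very rough per-user downlink rate when one band is split between
    carriers, then between neighboring cells, then between users."""
    spectrum_per_cell_hz = band_hz / carriers / reuse_factor
    cell_throughput_bps = spectrum_per_cell_hz * bits_per_hz
    return cell_throughput_bps / active_users / 1000

rate = per_user_kbps(
    band_hz=25e6,     # downlink band width from the text
    carriers=3,       # assumed number of operators sharing the band
    reuse_factor=4,   # assumed: a cell gets 1/4 of its operator's share
    bits_per_hz=0.5,  # ballpark 2G spectral efficiency
    active_users=20,  # assumed simultaneous users in one cell
)
print(f"about {rate:.0f} kbit/s per user")
```

With these guesses you land at around 50 kbit/s per user. Tweak the placeholders however you like; it's hard to get past a few hundred kbit/s without either emptying the cell or finding more spectrum.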

It probably wasn't such a big problem though: phone CPUs were slow, radio chips weren't that good, so even if you had all the bandwidth in the world, you wouldn't have done a whole lot better. This did change eventually though.

Accordingly, we kept adding more frequency bands. The one around 1800 MHz, still for 2G, had a lot more room. And then... enter 3G and a lot more bands... typically under 3 GHz somewhere. With LTE, we added even more.

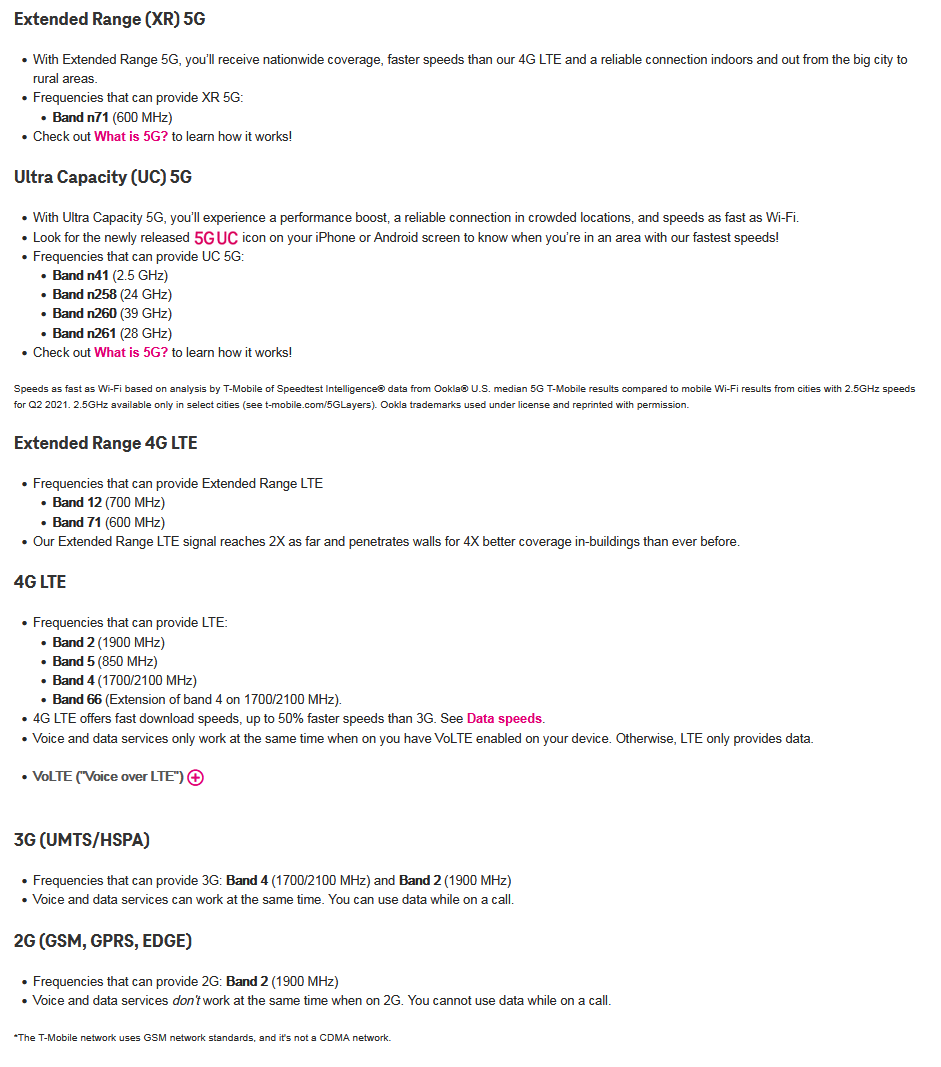

Look at, e.g., the current US T-Mobile bands (original, archive text in case they change it later):

Their 2G "band 2" is the 1900 MHz one, with an otherwise impressive 60 MHz of bandwidth both up and down. For 3G bands, they have band 4 (45 MHz around 1700 MHz) and band 2 (60 MHz around 1900). Slightly better. (... this is, by the way, just a superset: T-Mobile has to share some of this with other carriers.) Meanwhile, if you look up LTE band plans in the US... there are quite a few of them flying around with multiple tens of MHz.

So it's all about bandwidth?

As it looks like... a lot of the improvement in mobile speeds derives from the fact that we just have more bandwidth allocated to mobile. Part of the entire point of 5G is that it can also use >6 GHz frequencies... of which there is a lot more, and they're less likely to interfere with other base stations, too.

(... the part where you might not even see your own base station, either, is an issue they don't like to talk a lot about though.)

However, there are multiple components here, too. Using the same bands, we can now transmit signals somewhat more efficiently, thanks to new, nicer coding schemes. We can allocate spectrum to devices in smaller slices and faster, resulting in lower latencies, and, yet again, more efficient usage of the spectrum. Additionally, you can kind of get around Shannon's theorem by using multiple antennas; this is also being used. You can check out this table here, listing spectral efficiency (bits per unit bandwidth) for different mobile standards; we did go from 0.5-ish (2G) to 4-ish (4G)... there are also numbers around 30 flying around for the multi-antenna case (... of course, only if everything goes well).
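To see how the two effects compare, here's a small sketch that splits the 2G-to-4G speedup into "better coding" and "more spectrum". The spectral efficiencies are the ballpark figures from the paragraph above; the channel widths (200 kHz for one GSM carrier, 20 MHz for one wide LTE carrier) are my assumption, and real networks complicate this in about a dozen ways.

```python
def peak_rate_bps(channel_hz: float, bits_per_hz: float) -> float:
    """Peak rate = channel width x spectral efficiency."""
    return channel_hz * bits_per_hz

# Efficiencies: ballpark figures from the text.
# Channel widths: assumed (one GSM carrier vs. one wide LTE carrier).
gsm = peak_rate_bps(200e3, 0.5)
lte = peak_rate_bps(20e6, 4.0)

efficiency_gain = 4.0 / 0.5
bandwidth_gain = 20e6 / 200e3

print(f"2G: {gsm / 1e3:.0f} kbit/s, 4G: {lte / 1e6:.0f} Mbit/s")
print(f"total gain {lte / gsm:.0f}x = "
      f"{efficiency_gain:.0f}x efficiency * {bandwidth_gain:.0f}x bandwidth")
```

Under these assumptions, the smarter coding buys you a single-digit factor, while the wider channel buys you two orders of magnitude.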

Overall though, the fact that we now have a lot more spectrum than we used to have is somewhat underrated. Mobile is just more important than it used to be, and with the end of analog TV broadcast, we suddenly had more room for it, too.

What's after 5G?

(... note: this is even more speculative than the rest of the post.)

Part of the point is... 5G is better than LTE in terms of spectrum efficiency, but not by a whole lot. If we switch to 5G on the same bands, we do get some improvements... but... it's kind of incremental. The big bandwidth claims of 5G rely on >6 GHz frequencies (in practice, the "millimeter-wave" bands at around 24 GHz and up); as mentioned before though, these have problems if you don't have line-of-sight to the base station.

And we can't go a lot higher, either. The higher you go, the closer you get to light-like behavior (the terahertz range is basically infrared already... also, satellite dishes, in the 10-20 GHz range, can work very well for focusing radio waves, which means that you'd get pretty bad reception behind one). So... all that remains is trying to increase coding efficiency (hard) and adding more antennas and beamforming (also not super easy). Alternatively, just add more base stations (which... is... not too cheap).
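A quick way to get a feel for the "light-like" part is to look at wavelengths (wavelength = speed of light / frequency). A tiny sketch follows; the example frequencies are ones I picked for illustration, not an official band list.

```python
SPEED_OF_LIGHT_M_S = 299_792_458

def wavelength_mm(frequency_hz: float) -> float:
    """Free-space wavelength in millimeters for a given frequency."""
    return SPEED_OF_LIGHT_M_S / frequency_hz * 1000

# Example frequencies, chosen for illustration only.
examples = {
    "900 MHz (classic 2G)": 900e6,
    "3.5 GHz (mid-band)": 3.5e9,
    "28 GHz (millimeter-wave)": 28e9,
    "1 THz": 1e12,
}
for label, freq in examples.items():
    print(f"{label:<26} {wavelength_mm(freq):8.2f} mm")
```

At 900 MHz the wavelength is about a third of a meter; by the millimeter-wave bands it's down to roughly a centimeter, so walls, hands, and leaves are all big compared to it, and the signal starts casting shadows much more like light does.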

So... if I were to guess, we won't be getting extremely large increases in mobile bandwidth soon. Not ones on the order of 3G vs. LTE. In fact, carriers already have a hard job pushing all the marketing on 5G.

On the plus side, we already have a ton of it!

... comments welcome, either in email or on the (eventual) Mastodon post on Fosstodon.